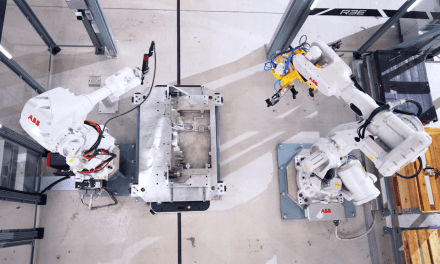

New York University (NYU) has collaborated with Toyota subsidiary Woven Planet Holdings on a project that promises to help both visually impaired pedestrians and autonomous vehicles better navigate complex urban settings.

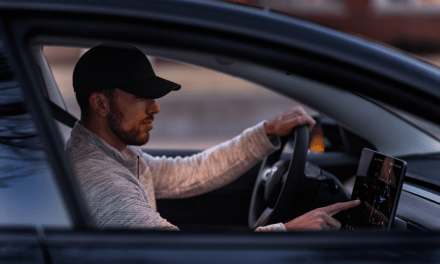

Woven Planet partnered with NYU Tandon’s Visualization, Imaging and Data Analytics Research Center (VIDA) to compile a dataset of more than 200,000 outdoor images, which is now being used to test a range of visual place recognition (VPR) technologies. The aim is to improve the accuracy of personal and automotive navigation applications, thereby promoting independence for a variety of users, from autonomous vehicles to visually impaired pedestrians.

Developed by a team from the Automation and Intelligence for Civil Engineering (AI4CE) lab, led by Chen Feng, assistant professor of civil and urban engineering, mechanical and aerospace engineering, and computer science and engineering, the dataset includes side-view images of sidewalks and storefronts in addition to forward-facing imagery. This allows researchers to test more applications than traditional single-perspective sources permit. The data could also help improve delivery robots, which must move forward and back as well as side to side to reach homes and businesses.

“This is the first work to systematically analyse some of the biggest challenges of visual place recognition,” said Dr Feng. “We believe we are the first to make such data available free for education and research purposes, which is critical to diagnose and solve pressing problems with visual place recognition. Vast datasets like this one from Woven Planet can provide critical variety and diversity to inform data-driven systems and speed machine learning at scale.”

Researchers at NYU, led by John-Ross Rizzo, professor of biomedical engineering and of mechanical and aerospace engineering at NYU Tandon and Vice Chair of Innovation for Rehabilitation Medicine at the NYU Grossman School of Medicine, are already using the dataset to develop technologies that will help visually impaired individuals better navigate complex urban environments.

“As a visually impaired person myself, I’ve long been frustrated that our population hasn’t seen more innovation in the navigation space; sure, solutions exist, but apply them in our urban canyons and accuracy, precision and reliability are all compromised,” said Dr Rizzo.

“Image-based wearable navigation assistance is set to make significant breakthroughs for everyone from the blind to the cognitively impaired to the elderly, helping with safe navigation in congested, complicated and often dangerous outdoor environments and also in unfamiliar indoor environments. Ultimately, this project has the potential to redefine accessibility, helping millions of people expand their horizons and better interact with the world.”

This project is sponsored by the C2SMART Center (Connected Cities for Smart Mobility Toward Accessible and Resilient Transportation), a USDOT Tier 1 University Transportation Center led by NYU Tandon. It uses images originally provided by CARMERA Inc., an automotive mapping company and former participant in NYU Tandon Future Labs that Woven Planet acquired in 2021.

“NYU has long been one of our core academic partners, in no small part because of our shared commitment to delivering social impact through mobility,” said Ro Gupta, senior director at Woven Planet and head of the company’s Automated Mapping Platform North America team. “It’s gratifying to see our data, which is core to our commercial mapping products, being used to help researchers around the world develop tools that will ultimately make mobility more accessible and equitable for all.”